What is a Large Language Model (LLM)?

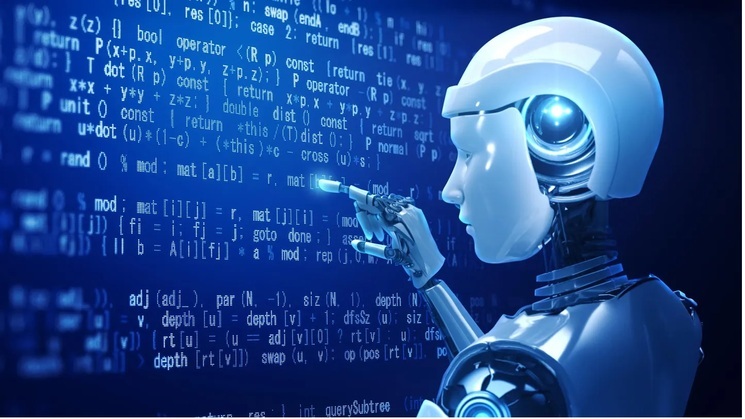

A Large Language Model (LLM) is an advanced artificial intelligence program that can process, understand, and generate human-like text; many recent models can also handle images and, in some cases, video. LLMs are built on deep neural networks. They are typically trained on huge data sets, often billions of data points, so that they can recognize input instructions and generate suitable output.

LLMs use a type of machine learning called deep learning to learn how characters, words, and sentences (and, in multimodal models, pixels and color combinations) fit together. Deep learning relies on the probabilistic analysis of raw, unstructured data, which lets the model learn patterns and fill in the missing pieces of a puzzle without human intervention.
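To make the idea of probabilistic analysis concrete, here is a toy sketch. It is not how a real LLM is built: it only counts which word follows which in a tiny made-up corpus and turns those counts into probabilities. The corpus and the function names (`train_bigram`, `next_word_probs`) are assumptions for illustration.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count how often each word is followed by each other word."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def next_word_probs(counts, word):
    """Turn the raw follow-up counts for `word` into probabilities."""
    followers = counts.get(word, Counter())
    total = sum(followers.values())
    return {w: c / total for w, c in followers.items()} if total else {}

# Made-up training text, purely for illustration.
corpus = "the cat sat on the mat the cat ate the fish"
model = train_bigram(corpus)
probs = next_word_probs(model, "the")
print(probs)
```

In this corpus "the" is followed by "cat" twice and by "mat" and "fish" once each, so "cat" gets the highest probability. A real LLM learns far richer patterns, but the output is still a probability distribution over what comes next.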

Working of Large Language Models:

Fundamentally, LLMs are built on machine learning, where programs are fed large data sets so that they can be trained to perform tasks without human intervention.

These machine learning (more precisely, deep learning) based LLMs are built on neural networks. The human brain is made up of billions of interconnected neurons that send signals to each other; similarly, a neural network is made up of nodes that connect with each other. The network is organized into several layers. Each node applies an activation function that acts like a threshold: only when its weighted input is strong enough does it pass a signal on to the next layer.
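The layer-and-threshold idea can be sketched in a few lines. This is a minimal illustration, assuming a ReLU activation as the "threshold" and hand-picked weights; in a trained network the weights are learned from data, and the names `relu` and `dense_layer` are chosen here for illustration.

```python
def relu(x):
    """Pass positive signals through, block everything else."""
    return max(0.0, x)

def dense_layer(inputs, weights, biases):
    """One fully connected layer: weighted sum per node, then activation."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(relu(total))
    return outputs

# Hand-picked numbers, purely for illustration.
inputs = [1.0, 2.0]
weights = [[0.5, -1.0], [1.0, 1.0]]  # one row of weights per node
biases = [0.0, -1.0]
hidden = dense_layer(inputs, weights, biases)
print(hidden)
```

The first node's weighted sum is negative, so it passes nothing on; the second node's sum is positive, so its signal reaches the next layer.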

Neural networks are categorized by their architecture. One popular type is the transformer model. Transformers use a mathematical technique called “self-attention” to relate every element of a sequence to every other element, which helps the model capture context. This is how an LLM can make sense of vague or ambiguous sentences: the surrounding words indicate which meaning is intended. The effect can resemble auto-correct, although the mechanism is far more general.
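Self-attention can be sketched without any libraries. The version below is deliberately simplified: it uses the token vectors directly as queries, keys, and values, whereas a real transformer first passes them through learned projection matrices. The two-dimensional token vectors are made up for illustration.

```python
import math

def softmax(scores):
    """Convert raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(vectors):
    """Scaled dot-product self-attention with queries = keys = values."""
    dim = len(vectors[0])
    outputs, all_weights = [], []
    for query in vectors:
        # Similarity of this token to every token, scaled by sqrt(dim).
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(dim)
                  for key in vectors]
        weights = softmax(scores)
        all_weights.append(weights)
        # Each output is a weighted mix of all token vectors.
        outputs.append([sum(w * v[i] for w, v in zip(weights, vectors))
                        for i in range(dim)])
    return outputs, all_weights

# Three hypothetical token vectors; the first two are similar.
tokens = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0]]
outputs, weights = self_attention(tokens)
```

Each row of `weights` says how much one token "looks at" every other token. Here the first token gives more weight to the similar second token than to the dissimilar third one, which is the sense in which attention captures context.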

Generative AI

What are the uses of LLM?

An LLM can be trained to do several tasks. At present, the most popular application of LLMs is generative AI. Publicly available generative AI tools include ChatGPT, Gemini, Grok, and Claude. LLMs can also be used in research, as chatbots, and in online search. For example, you can use Grok to perform multiple tasks, such as writing a poem or finding and fixing bugs in programs.

In the healthcare sector, LLMs help analyze textual data related to molecular biology. They are also being used as chatbots that help patients identify basic health conditions.

In the financial sector, LLMs are used to assist with customer communication and to detect fraudulent activity.

In addition, LLMs can detect and correct errors in the questions you ask. In my opinion, LLMs are an encyclopedia of the internet. The technology is still evolving, and work continues on analyzing and improving how LLMs behave.

Limitations of LLM:

Large Language Models are designed to improve human life with their trained abilities. But this technology has several limitations. Some of them are given below:

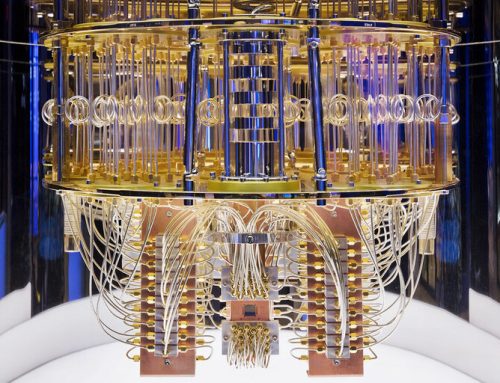

- Computational issues: LLMs are limited to a fixed number of tokens that they can process at once, known as the context window. This constraint keeps an LLM within its computational boundaries. On the positive side, the limit helps the LLM run smoothly, since memory use and response time stay bounded.

- Incorrect Interpretation: Large Language Models are prone to misinterpreting input data and producing misleading output, often called hallucination. This can happen for various reasons, but the primary one is related to training. If an LLM was trained on outdated data, it can hallucinate or misread emotional, and sometimes logical, nuances.

- Limited Knowledge Base: Most LLMs run with a limited knowledge base because they cannot acquire new information from the internet on their own after training. The training data is structured and refined specifically for training the LLM, whereas the data available on the internet is huge and complex. This makes it difficult to capture new information and update the model's knowledge automatically. Some AI companies, however, claim that their LLMs update their knowledge base automatically.

- Long-term Memory Issue: LLMs need large data centers to process, interpret, and retain information and to produce output. Large Language Models treat each user conversation or assigned task as a standalone process, which reduces their ability to retain information across different user sessions. Because each conversation or task is processed in isolation, continuity between conversations is lost.

- Hurdle in Understanding of Complex Reasoning: Large Language Models struggle with complex reasoning that requires understanding beyond literal meaning. This limitation is observed even in advanced transformer models.
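The token limit and the memory limitation from the list above interact, and a small sketch shows how. It assumes a crude tokenizer that counts whitespace-separated words (real models use subword tokenizers) and a made-up budget of 10 tokens; `count_tokens` and `fit_to_context` are hypothetical helper names. Since the model keeps nothing between calls, the caller has to resend the conversation history, and anything that does not fit in the budget is simply lost.

```python
def count_tokens(text):
    """Crude stand-in for a tokenizer: one token per whitespace-separated word."""
    return len(text.split())

def fit_to_context(messages, max_tokens):
    """Keep the most recent messages whose combined size fits the budget."""
    kept, used = [], 0
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break  # older messages no longer fit
        kept.append(message)
        used += cost
    return list(reversed(kept))

# Made-up conversation, purely for illustration.
history = [
    "My name is Asha",
    "I like hiking in the hills",
    "What is my name?",
]
prompt = fit_to_context(history, max_tokens=10)
print(prompt)
```

With this budget the oldest message, the one containing the name, is dropped, so a model receiving `prompt` would have no way to answer the final question. That is the discontinuity described above, in miniature.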

Future of LLM:

In the coming years, LLMs are expected to improve in many ways. Some of the expected improvements are listed below:

- Real-time Info check

- Self-learning capability

- Use only a specific part of the neural network for each input instead of the whole network

- Understand complex reasoning

- Domain-specific fine-tuning in models
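The third item in the list, running only part of the network for a given input, can be sketched as a small router that scores a set of "experts" and runs just the best match. This is a toy illustration under assumed names (`route`, `experts`, `gate_weights`) with hand-picked numbers; it is not a description of how any particular model does this.

```python
def route(features, gate_weights):
    """Score every expert for this input and return the index of the best one."""
    scores = [sum(w * x for w, x in zip(weights, features))
              for weights in gate_weights]
    return max(range(len(scores)), key=scores.__getitem__)

# Two hypothetical experts; only the selected one runs for a given input.
experts = [
    lambda x: [2 * v for v in x],  # expert 0
    lambda x: [v + 1 for v in x],  # expert 1
]
gate_weights = [[1.0, 0.0], [0.0, 1.0]]  # one row of gate weights per expert

features = [1.0, 3.0]
chosen = route(features, gate_weights)
output = experts[chosen](features)
print(chosen, output)
```

The second feature is the larger one, so the router picks expert 1 and expert 0 never runs for this input. The appeal of the approach is that the cost per input depends on the selected part, not on the size of the whole network.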

Thanks for reading. See you soon with another exploration!